By Jason Lackey, Network and Security Marketing, Keysight Technologies

Monitoring tools have always been critical for ensuring network performance and security. Top vendors have designed new tools specifically for monitoring networks with virtualization, cloud infrastructure, distributed data centers, and edge computing. Way back in 2016, Enterprise Management Associates found that 24% of enterprises used 6-10 monitoring tools and another 34% used 11 or more.[1] I did not find more recent research, but I think it’s safe to assume the number is growing. There are many good tools available and many important reasons for monitoring, including to:

- Identify security threats

- Resolve performance issues faster

- Predict impact of changes

- Avoid disruption as you scale

More options, more tools

With all the options, organizations end up with tools developed in-house, tools obtained for free or via open source, and tools purchased from third parties. New administrators bring in their favorite tools. The tendency is for organizations to add tools rather than replace existing solutions. Sometimes this happens because more than one team uses a tool and the teams may not agree on a replacement. Other times it happens because there is no process for retiring tools or auditing usage.

Tool sprawl increases IT operating costs

Unfortunately, as the number of tools increases, so do the operational complexity and cost of managing them. The costs may not be easily quantified, but they add up. For one thing, tools from different vendors run on different operating platforms and have different management interfaces (or ‘panes of glass’). It takes time for administrators to learn the tips and tricks of each tool and to switch between interfaces during the workday.

Administrators may also have to integrate information from multiple tools to produce a meaningful result, such as when one tool identifies an anomaly and another isolates the root cause. Tool integration is frequently achieved through custom programming interfaces. IT must then spend time supporting, maintaining, and upgrading these interfaces, all of which adds to operating costs and overall complexity.

More monitoring activity also generates more alerts that require follow-up and investigation. This work is time-consuming and requires experienced administrators, and an organization may not have the budget to add skilled staff. At some point, alerts may go without follow-up. If this happens, an organization can suffer a breach or disruption that was flagged and preventable but went unresolved because of data overload and resource constraints.

Beware the popular answer to tool sprawl

Be careful when an analyst or industry commentator tells you the answer to tool sprawl is to deploy or build a unified monitoring platform that eliminates multiple interfaces and reduces complexity. In articles and blogs on the future of network monitoring, analysts and industry watchers frequently present the single pane of glass as a way to simplify multi-vendor tool management. The idea sounds logical and valuable but is very hard to implement in a competitive marketplace. Similar proposals in other areas of IT have met with limited success, and companies often abandon the unification effort when it fails to deliver the expected results. You need a more practical strategy for managing tool sprawl: one that offers immediate results without relying on vendor adoption of standards or custom integration work.

Work smarter for more immediate results

One way to deal with the cost and complexity of network monitoring is to work smarter. If you collect the right data, zero in on issues more quickly, and reduce the workload on your tools, monitoring will be less costly and more accurate. Working smarter will help you manage complexity, not eliminate it.

First, be smarter about how you collect information from your network. When it comes to network monitoring, you need to make sure you see all of the network packets flowing through your enterprise, with no blind spots. Network packets provide details on the source, format, purpose, and destination of digital communications. Packets help your tools determine whether a performance problem is related to the underlying network or the application. Your tools should examine packets in every network segment to protect your network from security breaches and performance disruptions. A broad portfolio of multi-modal physical, virtual, and cloud-based network taps will give you access to packets from every part of your enterprise.

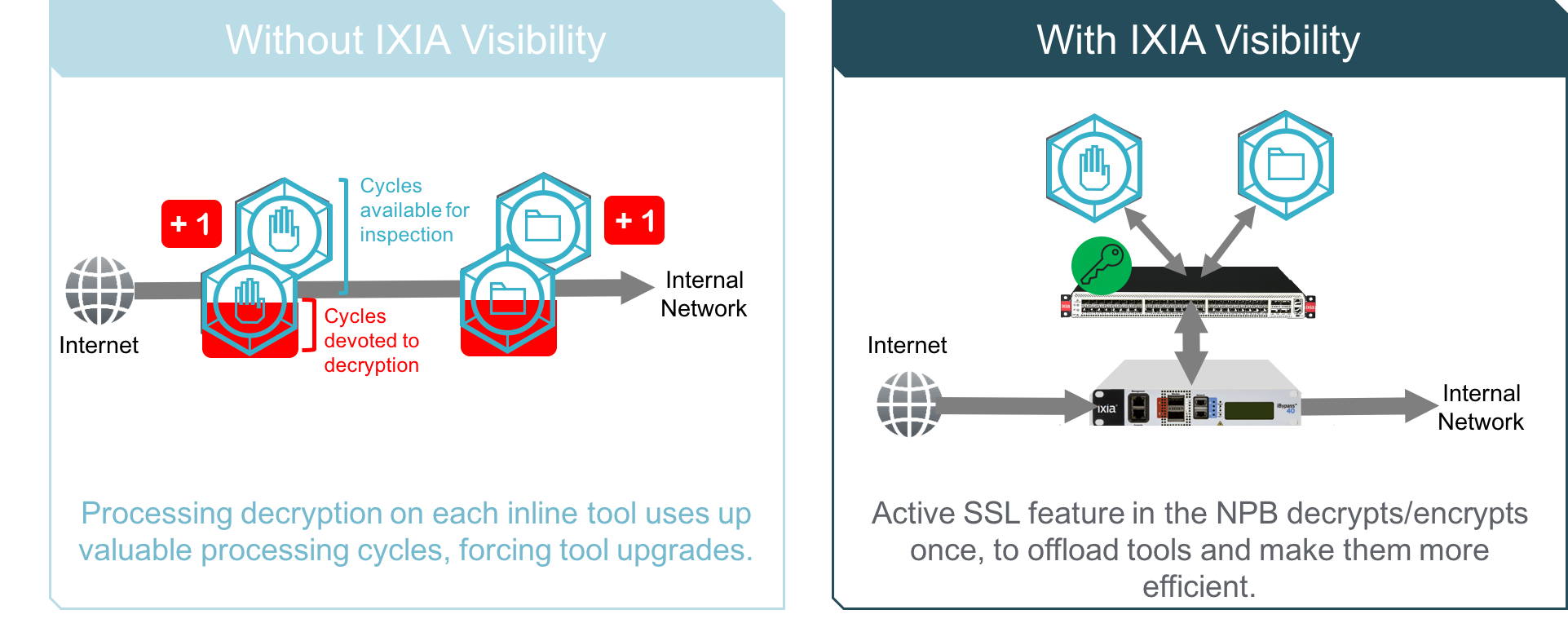
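As a rough illustration of what a tool can read from a packet, the minimal Python sketch below decodes the fixed 20-byte part of an IPv4 header (the layout defined in RFC 791) into source, destination, protocol, and a few other fields. It assumes the bytes begin at the IP header, with no Ethernet framing, and it ignores IP options. The function `parse_ipv4_header` and the sample addresses are hypothetical and are not part of any Keysight or Ixia product.

```python
import socket
import struct

# A few protocol numbers from the IANA registry; others are shown as raw numbers.
PROTOCOLS = {1: "ICMP", 6: "TCP", 17: "UDP"}

# Fixed IPv4 header layout in network byte order (20 bytes, options excluded).
IPV4_HEADER = struct.Struct("!BBHHHBBH4s4s")


def parse_ipv4_header(packet: bytes) -> dict:
    """Hypothetical helper: decode the fixed part of an IPv4 header."""
    if len(packet) < IPV4_HEADER.size:
        raise ValueError("packet too short for an IPv4 header")
    (version_ihl, _tos, total_length, _ident, _flags_frag,
     ttl, proto, _checksum, src, dst) = IPV4_HEADER.unpack_from(packet)
    version = version_ihl >> 4
    if version != 4:
        raise ValueError(f"not an IPv4 packet (version {version})")
    return {
        "version": version,
        "header_length": (version_ihl & 0x0F) * 4,  # IHL counts 32-bit words
        "total_length": total_length,
        "ttl": ttl,
        "protocol": PROTOCOLS.get(proto, str(proto)),
        "source": socket.inet_ntoa(src),
        "destination": socket.inet_ntoa(dst),
    }


# Usage: build a sample TCP header (checksum left at 0) and decode it.
sample = IPV4_HEADER.pack(0x45, 0, 60, 1, 0, 64, 6, 0,
                          socket.inet_aton("10.0.0.1"),
                          socket.inet_aton("192.168.1.20"))
print(parse_ipv4_header(sample))
```

Source and destination answer "who is talking to whom," while the protocol field hints at purpose; deeper fields such as ports and payload would come from the TCP or UDP header that follows.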

Be smarter about how you process data with your monitoring tools. Vendors don’t always tell you when you can offload tasks to simpler devices, because doing so might cost them a tool upgrade sale. By offloading simpler tasks, you can reserve more of a tool’s capacity for compute-intensive functions like analysis and forensics. A high-speed network packet broker (NPB) can perform tasks such as packet de-duplication, header stripping, NetFlow generation, and packet filtering faster and at lower cost than your monitoring tools. When you deploy a high-speed packet broker between your data collection devices and tools, you reduce the burden on your tools and can avoid tool upgrades as data volumes grow. Ixia’s Vision ONE NPB also offers active SSL decryption and re-encryption, so you don’t need to purchase a separate decryption device.
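De-duplication is a good example of a simple but high-volume task. When the same packet is captured at two tap points, the tools receive two copies and spend capacity analyzing both. The minimal Python sketch below assumes the simplest possible rule: drop any packet whose bytes hash identically to a packet first seen within a short time window. The class name `Deduplicator` and the 50 ms default window are illustrative assumptions; a real broker does this at line rate and also has to account for header fields that can differ between capture points (such as TTL), which this sketch does not model.

```python
import hashlib
from collections import OrderedDict


class Deduplicator:
    """Hypothetical sketch: forward a packet only if no identical copy was
    first seen within `window` seconds. Timestamps must be non-decreasing."""

    def __init__(self, window: float = 0.05):
        self.window = window
        # digest -> timestamp of first sighting, kept in arrival order
        self._seen: "OrderedDict[bytes, float]" = OrderedDict()

    def _expire(self, now: float) -> None:
        # The oldest entry is first, so stop at the first one still in the window.
        while self._seen:
            _digest, first_seen = next(iter(self._seen.items()))
            if now - first_seen <= self.window:
                break
            self._seen.popitem(last=False)

    def accept(self, packet: bytes, timestamp: float) -> bool:
        """Return True if the packet should be forwarded to the tools."""
        self._expire(timestamp)
        digest = hashlib.sha256(packet).digest()
        if digest in self._seen:
            return False  # duplicate within the window: drop it
        self._seen[digest] = timestamp
        return True


# Usage: the second copy of pkt-A arrives 10 ms later and is dropped;
# the third copy arrives after the window has expired and is forwarded.
dedup = Deduplicator(window=0.05)
stream = [(b"pkt-A", 0.000), (b"pkt-A", 0.010), (b"pkt-B", 0.020), (b"pkt-A", 0.200)]
forwarded = [pkt for pkt, ts in stream if dedup.accept(pkt, ts)]
print(forwarded)
```

Bounding memory with a time window is the design choice that matters here: the broker only needs to remember recent packets, so the work stays constant per packet no matter how long the capture runs.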

Finally, be smarter about the way you move data through your monitoring architecture. An NPB lets you control and customize the path data takes. Sending data from one tool to the next in a serial path increases latency and delays the resolution of issues or breaches. An NPB with a drag-and-drop graphical user interface lets you easily control how data flows. You can send decrypted packets to multiple security tools at the same time for parallel processing. Or you can select only packets with certain characteristics and forward them for advanced analysis. NPBs give you control over data movement and delivery, so you can increase monitoring efficiency.
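The difference between a serial chain and parallel delivery can be modeled in a few lines. In the minimal Python sketch below, each monitoring tool is represented by a filter rule, and every packet is copied to each tool whose rule matches, so no tool waits for another to finish. The `Packet` fields, the `fan_out` function, and the tool names `ids`, `dlp`, and `apm` are hypothetical; this models the idea only and is not how a Vision ONE configuration is expressed.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Packet:
    """Hypothetical packet metadata that a broker can filter on."""
    src: str
    dst: str
    protocol: str
    dst_port: int


Rule = Callable[[Packet], bool]


def fan_out(packets: List[Packet], rules: Dict[str, Rule]) -> Dict[str, List[Packet]]:
    """Copy each packet to every tool whose rule matches (one-to-many, not serial)."""
    queues: Dict[str, List[Packet]] = {tool: [] for tool in rules}
    for pkt in packets:
        for tool, matches in rules.items():
            if matches(pkt):
                queues[tool].append(pkt)
    return queues


# Usage: two security tools receive all traffic in parallel, while a
# hypothetical analysis tool receives only TCP traffic to port 443.
rules: Dict[str, Rule] = {
    "ids": lambda p: True,
    "dlp": lambda p: True,
    "apm": lambda p: p.protocol == "TCP" and p.dst_port == 443,
}
traffic = [
    Packet("10.0.0.5", "10.0.1.9", "TCP", 443),
    Packet("10.0.0.7", "10.0.1.9", "UDP", 53),
]
queues = fan_out(traffic, rules)
print({tool: len(q) for tool, q in queues.items()})
```

Because each rule is evaluated independently, adding a tool means adding a rule rather than inserting another hop into a chain.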

Summary

There are many good solutions for securing and optimizing your network, and vendors are working to introduce even better ones. Tool sprawl will continue to be a challenge. You can, however, reduce the impact of tool sprawl on operating costs by increasing the efficiency of your monitoring architecture. Be sure you are collecting network packets from your entire network. Packets contain the details your tools need to identify threats, anticipate performance issues, and isolate the root cause of disruptions. Offload as many processing tasks as you can from your tools to help them scale. Finally, use a control layer between your data collection devices and monitoring tools to quickly move data to where it needs to be.